First came text

GPT-2 and other Transformer AI (artificial intelligence) models are impressive. After the AI has been trained on an enormous corpus of text, you give it some starting text and it continues, generating one token (a word or word fragment) after another to make up recognizable, even creative, language that maintains the style. Here’s how https://talktotransformer.com/, an online GPT-2 system, continued when I fed it the words in bold:

**“The AI ruined my life” muttered Denise.**

“You are the target of someone else’s revenge” replied Cale.

“And you did nothing to prevent it”

“Your words…”

“Yes, that is what I say” smiled Cale.

“So the boys are more than just a valuable asset”

“Yeah”

“But I have things to tell you guys now”

“Huh?”

Cale looked at it. “If we were to adopt our normal lives, we wouldn’t be able to afford everything we want in this world. So for us, we must keep working. It’s this effect of working hard that we were able to obtain everything, even the rare

It’s not simply regurgitating matching phrases that it has ingested. In compressing down the 40 gigabytes of text it was fed into some kind of internal representation in its neural network of only 1.5 billion parameters, it has noticed certain features of what you give it and they trigger… something… in its neural network that riffs on what you provided. Give it the same starting text again and it goes off in a different direction.

**“The AI ruined my life” muttered Denise.**

“Ha! You’re quite an interesting girl. Like a puppet.”

A sorcerer suddenly appeared next to the table and pointed his wand at Denise, saying “Use your magic!”

A short spell field with dazzling golden lights appeared above Denise’s head and soon a single tablet appeared on the table and began to write. Then the tablet turned around and charged towards the sky with a radiant light.

The tablet charged forwards quickly!

The intensity of the light was frightening. Once it passed the boundary of the spell field, it became all-encompassing. The tablet was so bright that the color seemed to change from a golden to a blue, and not only did it look beautiful,

And again:

**“The AI ruined my life” muttered Denise.** “I looked over at Bobby, who was sitting with his back against the wall and moving his head side to side. He said nothing. His mouth was hanging open. I then turned back to the door and said, “What the hell is going on?” The door opened and I was met by my father, who had been in hiding for about three weeks and was waiting for me at the door. Bobby was still sitting there, looking over at his father. But in this instance the looks were no longer new. Instead, they had become familiar, like a sick man watching a recently emerged corpse of his loved one. His face turned beet red and he said, “You didn’t make my greatest
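Why three different stories from one starting sentence? As far as I understand it, the model doesn't pick "the" next token; it scores every candidate and one is then drawn at random, weighted by those scores. Here is a minimal Python sketch of that one step. The scores in `toy_scores` are numbers I made up, and `sample_next` is my own illustrative helper, not anything from GPT-2's actual code.

```python
import math
import random

def sample_next(scores, temperature=1.0, rng=random):
    """Pick one token at random, weighted by softmax(scores / temperature)."""
    tokens = list(scores)
    # Subtract the max score before exponentiating for numerical stability.
    top = max(scores.values())
    weights = [math.exp((scores[t] - top) / temperature) for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for the word that follows the prompt (invented numbers).
toy_scores = {"You": 2.0, "Ha": 1.5, "I": 1.2, "A": 0.3}

# Different random seeds can lead to different first words,
# and every later word then builds on that choice.
for seed in (1, 2, 3):
    print(seed, sample_next(toy_scores, rng=random.Random(seed)))
```

Lowering `temperature` makes the top-scoring token win more often; raising it makes the output wilder.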

Transformer models don’t just continue writing in almost any style. With additional fine-tuning they can recognize a question-and-answer format, or a simple math problem, or a multiple-choice quiz, or a request to summarize, … and continue with the answer to the problem, sometimes better than most humans. And the newest GPT-3 (175 billion parameters in the model! fed hundreds of billions of words! gargantuan PDF paper!) can do all these without any fine-tuning! It’s ingested so much text that if you give it one or a few examples of what you want it will figure out what you’re asking for, just as a kid can participate in a made-up game without having to go to classes in that game. The following interaction, getting it to use a made-up word, is amazing to me:

To do a “farduddle” means to jump up and down really fast. An example of a sentence that uses the word farduddle is:

One day when I was playing tag with my little sister, she got really excited and she started doing these crazy farduddles.
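For what it's worth, "giving it one or a few examples" just means pasting them in front of your request as plain text and letting the model continue. A minimal Python sketch of assembling such a prompt follows; the “snorple” example and the helper name `build_few_shot_prompt` are my own inventions for illustration, and no real API is being called.

```python
def build_few_shot_prompt(examples, query):
    """Join worked examples and a final unanswered query into one prompt string."""
    blocks = [f"{definition}\n{sentence}" for definition, sentence in examples]
    blocks.append(query)  # the model is expected to continue from here
    return "\n\n".join(blocks)

# One invented worked example (hypothetical, not from the GPT-3 paper).
examples = [
    (
        "A “snorple” is a very loud sneeze. An example of a sentence "
        "that uses the word snorple is:",
        "Grandpa let out such a snorple that the cat fled the room.",
    ),
]

# The unanswered request, taken from the interaction quoted above.
query = (
    "To do a “farduddle” means to jump up and down really fast. "
    "An example of a sentence that uses the word farduddle is:"
)

prompt = build_few_shot_prompt(examples, query)
print(prompt)
```

The model never gets told "this is a vocabulary game"; the pattern in the text is the only instruction.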

It’s “merely” responding to input, but be honest, that’s all you’re doing when someone asks “How are you?” or “What day is it?”

It’s been my hope for decades (my thoughts in 2006, 2010) that some AI would gain enough smarts to understand language, then overnight it would ingest every document on the Internet and be the smartest thing in the world. Instead, AI researchers force-feed a huge subset of the Internet into a language model and it “does language” extremely well without understanding what it’s doing or what it all means.

OK so music…

You can apply a similar approach to music. Train a transformer on the musical note instructions in MIDI files, and then give it some starting parameters, and it can generate further musical instructions. OpenAI built such a system, called MuseNet. Here is what transpired when world-unfamous producer skierpage told MuseNet to improvise in the style of Disney starting from Beethoven’s Für Elise. The piano continues well enough, but then the meth-addled drummer comes in from another planet and goes nuts, and then it ends with a piano flourish. I can’t imagine a human being coming up with this.
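To make "train a transformer on the note instructions" a bit more concrete: the notes first have to become a flat sequence of tokens, just like words in a sentence. Here is a minimal Python sketch under my own assumptions. The `instrument:vVelocity:pitch` token format and the names `Note` and `notes_to_tokens` are hypothetical, chosen for illustration, and should not be read as MuseNet's real encoding.

```python
from typing import NamedTuple

class Note(NamedTuple):
    instrument: str
    pitch: int      # MIDI note number, 0-127 (60 is middle C)
    velocity: int   # MIDI loudness, 0-127

def notes_to_tokens(notes):
    """Turn note events into flat text tokens a sequence model could train on."""
    return [f"{n.instrument}:v{n.velocity}:{n.pitch}" for n in notes]

# The first five notes of Für Elise: E5, D#5, E5, D#5, E5.
fur_elise = [Note("piano", pitch, 80) for pitch in (76, 75, 76, 75, 76)]

tokens = notes_to_tokens(fur_elise)
print(" ".join(tokens))
```

Once music looks like this, "continue the piece" is the same problem as "continue the sentence".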

OpenAI has now moved on to generating actual waveforms of music with its new system, called Jukebox. I think the main motivation is that it can generate someone singing lyrics as well as the instrumental performances. This is crazy. It learns to compress digital music files at 44,100 samples a second down to a much smaller compressed representation that only it understands, and then if you ask for music in some style it creates music in that compressed representation and “blows it back up” into millions of samples making up a musical waveform.
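Some back-of-the-envelope arithmetic shows why the compression step matters. This is a rough Python sketch: CD-quality audio runs at 44,100 samples a second, while the four-minute song length and the 128x compression factor are my assumptions for illustration (128x is, as I understand it, the coarsest level OpenAI describes).

```python
SAMPLE_RATE = 44_100      # CD-quality samples per second
SONG_SECONDS = 4 * 60     # an assumed four-minute song
COMPRESSION = 128         # assumed compression factor for the coarsest level

raw_samples = SAMPLE_RATE * SONG_SECONDS
compressed_steps = raw_samples // COMPRESSION

print(f"raw samples:      {raw_samples:,}")
print(f"compressed steps: {compressed_steps:,}")
```

Under these assumptions the model only has to dream up tens of thousands of steps instead of more than ten million, and a decoder turns them back into the full waveform.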

Here’s “Rock, in the style of Elvis Presley.”

It’s weird, like a broken radio tuning into a performance by a rock and roll garage band that is in love with Elvis but has only ever heard his songs on its own broken radio. And the AI has learned that Elvis was frequently interrupted by crowd noises and cheering, so after a while it throws that in too.

The lyrics on this are confusing; OpenAI says “All the lyrics below have been co-written by a language model and OpenAI researchers.” But if you want crazy lyrics, someone found Jukebox’s continuations of Rick Astley’s legendary meme song. Jukebox mostly trundles along in that inimitable 80s Stock-Aitken-Waterman style, sometimes adding some novel production ideas or a keyboard solo, just like the original producers would mete out new ideas while sticking to the format. But its muffled lyrics include at 1:37 “you wouldn’t get this spaghetti on a guy… Stretch my …”. Later Rickbot goes bleak: 1:53 “Kiss the boat Denny I’m Satan’s pirate arrr”, and 3:53 “You know the rules and so you have to die.”

To stress the same point I made about the text generator: the AI isn’t simply pasting in bits of music that it has stored matching the starting music. Instead it is calling on the… vague memories/regularities/something… that it has gleaned from ingesting “1.2 million songs (600,000 of which are in English), paired with the corresponding lyrics and metadata from LyricWiki” to produce something new yet familiar. Go browse; for example, they asked it for Frank Sinatra and Ella Fitzgerald in front of a small orchestra.

… and why not images

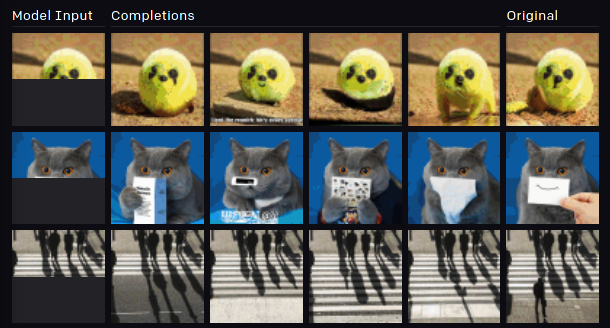

I’ve tried to write this blog post a few times, only to have OpenAI apply transformer AI to a new area. Just today, OpenAI announced a new paper wherein it gets another transformer AI to complete an image. Same idea: give the AI millions of images, don’t tell it anything, then give it the top half of an image and it will produce one pixel value after another that continue the image. Look at it get the cat joke just from a sliver of paper visible in the input (the left column is its input, the right column is the original complete image, the middle four columns are its continuations).
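The trick that makes "continue the image" the same problem as "continue the sentence" is reading the pixels off row by row into one long sequence. Here is a minimal Python sketch of that flattening, with the top half as the prompt and the bottom half as what the model would have to invent. The tiny 4x4 grayscale image and the helper name `split_for_completion` are hypothetical, purely for illustration.

```python
def split_for_completion(image):
    """Flatten an image row by row and split it into a prompt and a target."""
    height = len(image)
    width = len(image[0])
    flat = [pixel for row in image for pixel in row]  # raster order
    cut = (height // 2) * width                       # end of the top half
    return flat[:cut], flat[cut:]

# A hypothetical 4x4 grayscale image, one integer per pixel.
image = [
    [0, 0, 0, 0],
    [0, 9, 9, 0],
    [0, 9, 9, 0],
    [0, 0, 0, 0],
]

prompt, target = split_for_completion(image)
print("given to the model:", prompt)
print("left to generate:  ", target)
```

The model sees only the first list and produces the second one value at a time, each new pixel conditioned on all the pixels before it.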

So what does it mean?

These things are crazily impressive. It is rank speciesism to say “That’s not intelligent! It’s just doing something it’s been ~~programmed~~ ~~taught~~ ~~trained~~ fed so much data it recognizes what it should do,” especially when the format of its output is far beyond human capacity: you’ve been trained for years on Real Life but aren’t able to generate the sound of a band and Elvis Presley, or the pixels of a photorealistic image. These AIs are intelligent! And yet… they can’t maintain the plot or a musical idea over the entirety of a short story or a song. So what is it that we do when we create? Somehow we have an outline for the overall structure of the artwork, and fill in along its lines. I’m no expert, but it seems that creativity may be easier to implement than a general intelligence which can deal in concepts and know what words mean. None of these AIs can talk about their work. We can’t ask “What do you find hard? What do you enjoy? What were you aiming for when you went off on that tangent?” They’re sui generis, but the closest analogy seems to be idiots savants.