Hi music fans.

OpenAI’s Jukebox was generating music waveforms from scratch way back in 2020 (as I wrote). Since then, silence from OpenAI. I suspect half the music in Spotify’s “contemplative acoustic music for yoga/Pilates” and “mid-tempo EDM for hip restaurant” playlists is now computer-generated just to screw fleshy musicians out of their tiny streaming royalties.

Meanwhile, AI image generation has gone wild with DALL-E, Craiyon, Imagen, Midjourney, and Stable Diffusion (as I wrote, with pictures). So why not “merely” train an AI to generate spectrograms of music, then turn those images into audio? And just as image generators can make strange videos that morph from one image to the next, have the AI morph the spectrogram into an image of the next musical motif. Two programmers did just that in their spare time. Their about page, https://www.riffusion.com/about, is a clear, entertaining, and (to me) sensational introduction. The last music sample, “Fantasy ballad, female voice to teen boy pop star”, is crazy. Again, this is a sequence of images dreamed up by a computer that sound like music.
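The “turn those images into audio” step is subtler than it sounds. A spectrogram image records how loud each frequency is over time, but not the phase of each frequency, so the phase has to be guessed before you can play it. A classic way to guess it is the Griffin-Lim algorithm: start with random phases, then repeatedly convert to audio and back, keeping the known loudness and only the newly estimated phases. Below is a minimal numpy sketch of that idea. It assumes a plain linear-frequency magnitude spectrogram and made-up parameters (`n_fft=512`, `hop=128`, an 8 kHz test tone). It is not Riffusion’s code, and it skips the mel scaling and image encoding a real spectrogram-to-music pipeline would involve.

```python
import numpy as np

N_FFT = 512  # assumed frame size (samples)
HOP = 128    # assumed hop between frames (samples)


def hann(n):
    """Periodic Hann window of length n."""
    return 0.5 - 0.5 * np.cos(2 * np.pi * np.arange(n) / n)


def stft(x, n_fft=N_FFT, hop=HOP):
    """Windowed short-time Fourier transform; returns an array of shape (frames, bins)."""
    window = hann(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop:i * hop + n_fft] * window for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)


def istft(spec, n_fft=N_FFT, hop=HOP):
    """Least-squares inverse STFT: windowed overlap-add, normalized by the summed squared window."""
    window = hann(n_fft)
    n_frames = spec.shape[0]
    length = n_fft + hop * (n_frames - 1)
    out = np.zeros(length)
    norm = np.zeros(length)
    frames = np.fft.irfft(spec, n=n_fft, axis=1)
    for i in range(n_frames):
        out[i * hop:i * hop + n_fft] += frames[i] * window
        norm[i * hop:i * hop + n_fft] += window ** 2
    return out / np.maximum(norm, 1e-8)  # avoid dividing by zero at the edges


def griffin_lim(magnitude, n_iter=50, seed=0):
    """Estimate audio whose STFT magnitude matches `magnitude`, guessing the missing phase."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(magnitude.shape))  # random starting phase
    for _ in range(n_iter):
        audio = istft(magnitude * phase)
        phase = np.exp(1j * np.angle(stft(audio)))  # keep only the re-estimated phase
    return istft(magnitude * phase)


def spectral_error(magnitude, audio):
    """Relative difference between a target magnitude spectrogram and that of `audio`."""
    return np.linalg.norm(np.abs(stft(audio)) - magnitude) / np.linalg.norm(magnitude)


if __name__ == "__main__":
    # Hypothetical test signal: one second of a 440 Hz tone sampled at 8 kHz.
    tone = np.sin(2 * np.pi * 440 * np.arange(8000) / 8000)
    target = np.abs(stft(tone))  # the "image": loudness only, phase thrown away
    print("random phase error:", spectral_error(target, griffin_lim(target, n_iter=0)))
    print("after 50 iterations:", spectral_error(target, griffin_lim(target, n_iter=50)))
```

The printed errors should show the guessed phases fitting the spectrogram much better than random ones. Real music is harder than a single tone, which is one reason generated clips can sound a bit smeared or “phasey.”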

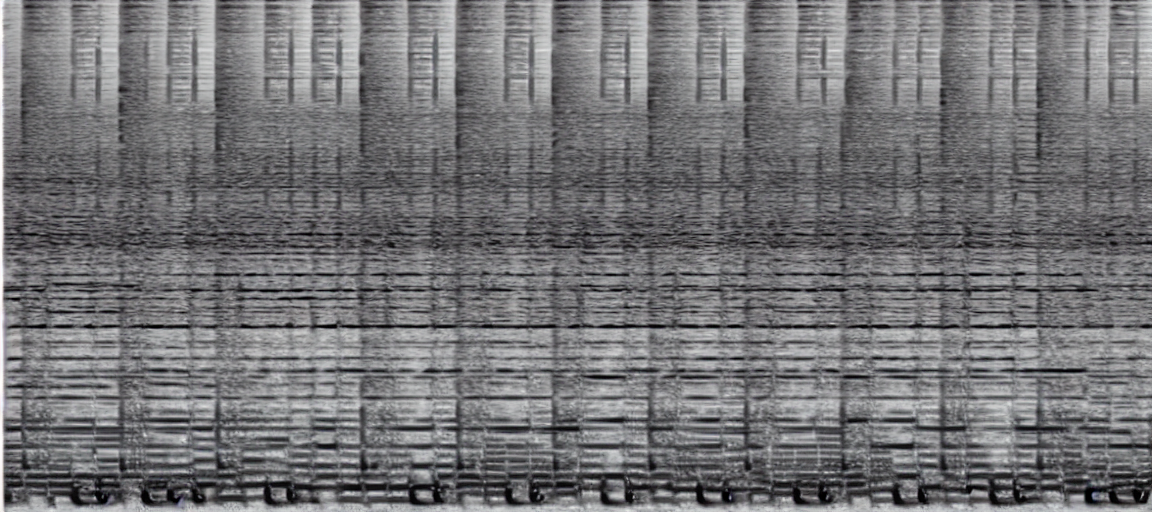

Above is the image that https://huggingface.co/spaces/fffiloni/spectrogram-to-music generated when I prompted “hard rock electric guitar solo” (the original Riffusion site is, of course, overloaded). You can hear it at https://huggingface.co/spaces/fffiloni/spectrogram-to-music/discussions/28. It’s no Tim Henson, but again, this is just a few programmers throwing something together in their off hours. What is creativity? Where does this highway go to? How do I work this? My god, what have we done? (– Talking Heads)